- Distributed Grep

- Count of URL Access Frequency

- Reverse Web-Link Graph

- Term-Vector per Host

- Inverted Index

Applying MapReduce

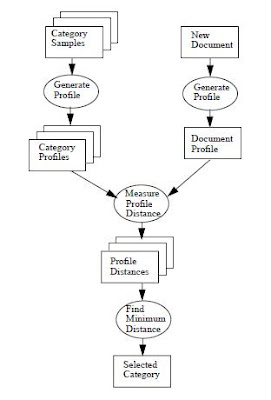

To determine if MapReduce might be a potential solution to a concurrent programming. Here are the questions to ask:- Does the algorithm break down into two separate phases (i.e., Map and Reduce)?

- Can the data be easily decomposed into equal-size partitions in the first phase (i.e., Map)?

- Can the same processing be applied to each partition, with no dependencies in the computations, and no communication required between tasks in the first phase?

- Is there some “mapping” of data to keys involved?

- Can you “reduce” the results of the first phase to compute the final answer(s)?

Volume Rendering

In [2], Jeff A. Stuart el al. used a volume rendering technique called segmented ray casting [5] (or ray partitioning [6]).In [3,4], I and my colleagues have demonstrated an alternative way of parallel implementation of volume rendering on Denali. In Fig. 1, we see that sample points along the rays with the same distance from the image plane are in the same plane. So, instead of casting rays, we can equally well sample the volume perpendicular to the viewing direction at different distances from the image plane. This parallelization scheme is called parallel plane cutting.

In this article, I'll explore the possibility of adapting parallel plane cutting to MapReduce computation.

MapReduce Basics[7,8]

MapReduce is an algorithmic framework, like divide-and-conquer or backtracking. Its model derives from themap and reduce combinators from a functional language like Lisp. It is an abstraction that allows Google engineers to perform simple computations while hiding the details of:- Parallelization

- Data distribution

- Load balancing

- Fault tolerance

- Input data

- MapReduce program

- Configuration information

A MapReduce job implemented in Hadoop is illustrated below:

The data flow of the model is shown in Figure 3. This diagram shows why the data flow between map and reduce tasks is colloquially known as “the shuffle,” as each reduce task is fed by many map tasks.

Map and Reduce Tasks

In this article, we will use Hadoop as the framework for our design consideration. Hadoop supports the MapReduce model which was introduced by Google as a method of solving a class of petascale problems with large clusters of inexpensive machines. Hadoop runs the MapReduce job by dividing it into tasks, of which there are two main types:- Map tasks

- Reduce tasks

The idea behind map is to take a collection of data items and associate a value with each item in the collection. That is, to match up the elements of the input data with some relevant value to produce a collection of key-value pairs. In terms of concurrency, the operation of pairing up keys and values should be completely independent for each element in the collection.

The reduce operation takes all the pairs resulting from the map operation and does a reduction computation on the collection. The purpose of a reduction is to take in a collection of data items and return a value derived from those items. In more general terms, we can allow the reduce operation to return with zero, one, or any number of results. This will all depend on what the reduction operation is computing and the input data from the map operation.

Data Decomposition

As shown in Figure 2, the first design consideration is data composition (or split). There are at least two factors to be considered:- Data locality

- Task granularity vs. parallel overhead cost

Fine-grain parallelism allows for a more uniform distribution of load among nodes, but has the potential for a significant overhead. On the other hand, Coarse-grain parallelism incurs a small overhead, but may not produce a balanced loading. For our volume rendering, there will be an optimal sub-volume size (TBD) that incurs a smaller overhead while produces a better load balancing.

InputFormat

In Hadoop (see Figure 2), user-provided InputFormat can be used for custom data decomposition. An InputFormat describes both how to present the data to the Mapper and where the data originates from. An important job of the InputFormat is to divide the input data sources (e.g., input files) into fragments that make up the inputs to individual map tasks. These fragments are called splits and are encapsulated in instances of the InputSplit interface.In the parallel cutting plane approach, we subdivide volume into sub-volumes for the rendering. Volume data can be stored in different formats. To simplify this discussion, we assume our input data are stored in sub-volumes (i.e., voxels belonging to the same sub-volume are stored consecutively and in an individual file).

Objects which can be marshaled to or from files and across the network must obey a particular interface, called Writable, which allows Hadoop to read and write the data in a serialized form for transmission. If the Objects are Keys, WritableComparable interface should be used instead.

To support our volume renderer, a custom InputFormat with two custom data types (i.e., SubVolumeKey and SubVolumeValue) needs to be created. A high level description of the implementation is provided below:

public class VolumeInputFormat extends SequenceFileInputFormatThe RecordReader implementation is where the actual file information is read and parsed.{ public RecordReader getRecordReader( InputSplit input, JobConf job, Reporter reporter) throws IOException { reporter.setStatus(input.toString()); return new VolumeRecordReader(job, (FileSplit)input); } ... }

class VolumeRecordReader implements RecordReaderIn SubVolumeKey, you need to provide the following minimum information:{ public VolumeRecordReader (JobConf job, FileSplit split) throws IOException { .. } public boolean next(SubVolumeKey key, SubVolumeValue value) throws IOException { // get next sub-volume } public Text createKey() { return new SubVolumeKey(); } public Point3D createValue() { return new SubVolumeValue (); } ... }

- 2D footprint offset (Fx, Fy)

- Transformation matrix (M)

- 3D sub-volume offset (Vx, Vy, Vz)

- Resampling mode (R)

- 3D Zooming and 2D Scaling factors (Z and S)

- Projection function (P; for example max operation)

Map Function

In this article, we will use Maximum Intensity Projection (MIP) as our volume rendering example. In scientific visualization, MIP is a volume rendering method for 3D data that projects in the visualization plane the voxels with maximum intensity that fall in the way of parallel rays traced from the viewpoint to the plane of projection.Same principles used for MIP can be applied to Isosurface Rendering (SR). In SR, a Z-buffer or depth matrix is generated as the result. This matrix is actually a 2D image whose values are the depth values at which an isosurface threshold occurs for a given viewing direction. A shading procedure using depth-gradient shading is then applied to generate a colored image.

In [3], we have demonstrated other parallel volume rendering methods too:

- Multi-Planar Reformatting

- Volume Resampling

- Ray Sum

- max(0, 20, 10, 25, 15) = max(max(0, 20, 10), max(25, 15)) = max(20, 25) = 25

For MIP, the map task includes the following sub-tasks:

- Resample voxels on each cutting plane

- Prepare intermediate results for the consumption of reduce tasks

Sort and Shuffle

Custom data types are needed for the intermediate results (i.e., 2D image tiles):- SubImageKey

- SubImageValue

- 2D footprint offset (Fx, Fy)

- Projection function (P; for example max operation)

- 2D footprint distance (Fz; but this is not needed in MIP)

Reduce Function

The footprint of each sub-volume after projection is a 2D image tile. In Figure 4, we see that image tiles may overlay each other. The final image is created by recombining image tiles. Therefore alignment of image tiles in the projection and recombination process is an important task in this work. If not correct, you may introduce artifacts into the final image. For medical imaging, none of such artifacts can be tolerated.For MIP, the reduce task includes the following sub-tasks:

- Apply projection function (i.e., max) to each pixels on the intermediate results

- Assemble the final image in a global output file with a specified format.

Conclusion

For a divide-and-conquer approach, the construction of the final image requires a number of stages. Image tiles of individual sub-volumes are generated after sampling and blending. A recombination process which takes care of pixel alignments is used to place these tiles into the final image under a specific merging condition. Finally, a post-rendering process called Z merging, with a depth compare done upon merging, can be used to integrate volume images with 3D graphics.Finally, I want to use this article to pay tribute to Dr. Bruce H. McCormick (1928 - 2007) who is my most respected college professor and Ph.D. adviser [10].

References

- Introduction to Parallel Programming and MapReduce

- Mult-GPU Volumne Rendering using MapReduce

- S. Y. Guan and R. Lipes, “Innovative Volume Kendering Using 3D Texture Mapping,” Proceedings of Medical imaging 1996-Image Capture. Formatting, and Display, vol. 2 164. pp. 382-392, Feb. 1994.

- S. Y. Guan, Bleiweiss, A., Lipes, R. “Parallel Implementation of Volume Rendering on Denali Graphics Systems,” Parallel Processing Symposium, 1995. Proceedings., 9th International, pp. 700-706,1995.

- E. Camahort and I. Chakravmty, “Integrating Volume Data Analysis and Rendering on Distributed Memory Architectures,” Proceedings of 1993 Parallel Rendering Symposium, pp. 89-96, San Jose. CA, Oct. 1993.

- W. M. Hsu, “Segmented Ray Casting for Data Parallel Volume Rendering,” Proceedings of 1993 Parallel Rendering Symposium. pp. 7-14, San Jose, CA, Oct. 1993.

- Pro Hadoop by Json Venner

- Hadoop: The Definitive Guide, Second Edition by Tom White

- Yahoo! Hadoop Tutorial

- Brain Networks Laboratory at Texas A&M University

- Learn Hadoop: How To Process Data with Apache Pig

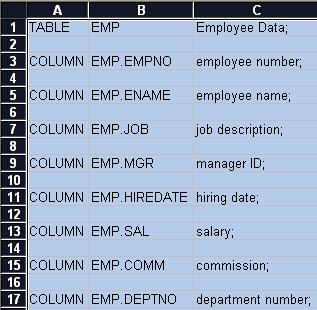

Ename in the search result table is a link which can be used to navigate to the employee detail page of a specific employee. When this link is clicked, a sub-flow (or nested bounded task flow) is called and it displays the

Ename in the search result table is a link which can be used to navigate to the employee detail page of a specific employee. When this link is clicked, a sub-flow (or nested bounded task flow) is called and it displays the